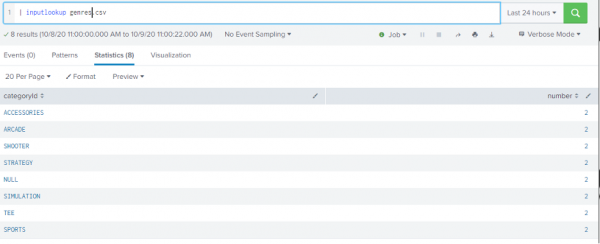

If you have seen my previous post "Upgrading Linux Forwarders Using the Deployment Server", you know that I love figuring out how to do unconventional tasks using Splunk. A couple of weeks ago I was working with a customer who has several search heads and wanted a way to sync lookup files without relying on third-party tools such as rsync. Since Splunk is a very open platform, I knew this could be accomplished using a custom REST endpoint. However, I wanted to use pure SPL so the solution would be completely portable and usable without installing additional apps.

Knowing that Splunk can search a specific search peer using the splunk_server parameter, I added the source search head to the destination search head as a search peer. I was hoping the inputlookup command allowed the use of splunk_server, but it didn't. I began looking at existing REST endpoints and realized there was not one that would retrieve the contents of a lookup file. That pointed to the solution: I needed a way to run the inputlookup command remotely. I knew I could run a curl command from the operating system, execute any search, and retrieve the contents of a lookup using Splunk's robust REST API. I then realized I could do the same thing using the rest command on a search head.

I set up two search heads in my lab environment: sh1 with a "demo_assets.csv" lookup and sh2 without it. I then added sh1 as a search peer to sh2. Using the following search, I could retrieve the contents of the lookup file named "demo_assets.csv" from sh1:

`| rest splunk_server=sh1 /services/search/jobs/export search="| inputlookup demo_assets.csv" output_mode=csv | fields value`

If you run this search, you will notice the contents of the lookup are merged into a single value. I created a macro with some SPL magic that retrieves the lookup and reformats the contents into a table. This output can then be piped to the outputlookup command and written to a local file. Automating this transfer is now as simple as creating a scheduled search. Syncing lookups between your development and production or Enterprise Security and ad hoc search heads is no longer a problem! Feel free to install the SA or simply copy and paste the SPL from the macro as needed. Use at your own risk.

I am having a hard time trying to understand the difference between "lookup", "inputlookup", and "outputlookup". I am also trying to get a basic real-world example of why one may use one over the other. I am assuming that you first have to create the actual lookup file, which I have done from a static CSV file that contains some malicious domains. My badfile.csv contains a field named "Domain", and let's say I am trying to search my "weblogs" sourcetype, whose logs also have a field named "Domain". I know I need a common field in my lookup file that matches a field in the sourcetype I am searching, so a correlation can be made. I am trying to figure out whether I can use the "inputlookup" command to search for any hits, whether I need the "lookup" command, or whether I need a combination of both. Also, how would the outputlookup command play into this? I guess I am not sure what inputlookup vs lookup does and am just looking for a clearer definition. Any information that anyone can provide to give a basic understanding to a beginner is much appreciated.

For reference, the docs have a page for each command: lookup, inputlookup, and outputlookup. lookup adds data to each existing event in your result set, based on a field in the event matching a value in the lookup. inputlookup takes the table of the lookup and creates new events in your result set (either creating the result set completely or adding to a prior result set). outputlookup takes the current event set and writes it to a CSV or KV Store.

With your case there are two ways that I can think of offhand, with certain tradeoffs. Assuming you have a lookup defined named baddomains with the field Domain, one way to search would be to start from sourcetype=weblogs and filter it with a subsearch on the lookup. The subsearch would translate your lookup into the query ((Domain="bad.com") OR (Domain="bad.biz")). Subsearches have limitations on the number of rows and on execution time, so you'll want to figure out whether this makes sense for your data. The other case is if your lookup has another field, say is_bad, that has a "1" if a domain is bad.
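The two approaches for the baddomains case above can be sketched in SPL. These searches are an illustration under stated assumptions, not the exact searches from the original answer: they assume a lookup definition named baddomains with a Domain field, as described above, and the is_bad field is only present if your lookup actually carries it.

A subsearch built on inputlookup, which expands into the OR'd Domain conditions before the outer search runs:

```spl
sourcetype=weblogs [| inputlookup baddomains | fields Domain]
```

The lookup command, which enriches every weblogs event with is_bad and then keeps only the flagged ones:

```spl
sourcetype=weblogs
| lookup baddomains Domain OUTPUT is_bad
| search is_bad=1
```

outputlookup goes the other way and writes the current results to a lookup. Here seen_domains.csv is a hypothetical file name chosen for the example:

```spl
sourcetype=weblogs
| stats count by Domain
| outputlookup seen_domains.csv
```

The subsearch form filters early but is subject to the subsearch row and time limits, while the lookup form reads all weblogs events first and filters afterwards, so which one fits depends on the size of the lookup and of the event set.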
